Alan Turing

(Redirected from Turing, Alan)

Alan Mathison Turing (23 June 1912 – 7 June 1954) was an English mathematician, computer scientist, logician, cryptanalyst, philosopher, and theoretical biologist. Turing was highly influential in the development of theoretical computer science, providing a formalisation of the concepts of algorithm and computation with the Turing machine, which can be considered a model of a general-purpose computer. Turing is widely considered to be the father of theoretical computer science and artificial intelligence.

Quotes[edit]

- Mathematical reasoning may be regarded rather schematically as the exercise of a combination of two facilities, which we may call intuition and ingenuity. The activity of the intuition consists in making spontaneous judgements which are not the result of conscious trains of reasoning... The exercise of ingenuity in mathematics consists in aiding the intuition through suitable arrangements of propositions, and perhaps geometrical figures or drawings.

- "Systems of Logic Based on Ordinals," section 11: The purpose of ordinal logics (1938), published in Proceedings of the London Mathematical Society, series 2, vol. 45 (1939)

- In a footnote to the first sentence, Turing added: "We are leaving out of account that most important faculty which distinguishes topics of interest from others; in fact, we are regarding the function of the mathematician as simply to determine the truth or falsity of propositions."

- Instruction tables will have to be made up by mathematicians with computing experience and perhaps a certain puzzle-solving ability. There need be no real danger of it ever becoming a drudge, for any processes that are quite mechanical may be turned over to the machine itself.

- "Proposed Electronic Calculator" (1946), a report for National Physical Laboratory, Teddington; published in A. M. Turing's ACE Report of 1946 and Other Papers (1986), edited by B. E. Carpenter and R. W. Doran, and in The Collected Works of A. M. Turing (1992), edited by D. C. Ince, Vol. 3.

- A man provided with paper, pencil, and rubber, and subject to strict discipline, is in effect a universal machine.

- "Intelligent Machinery: A Report by A. M. Turing," (Summer 1948), submitted to the National Physical Laboratory (1948) and published in Key Papers: Cybernetics, ed. C. R. Evans and A. D. J. Robertson (1968) and, in variant form, in Machine Intelligence 5, ed. B. Meltzer and D. Michie (1969).

- There is a remarkably close parallel between the problems of the physicist and those of the cryptographer. The system on which a message is enciphered corresponds to the laws of the universe, the intercepted messages to the evidence available, the keys for a day or a message to important constants which have to be determined. The correspondence is very close, but the subject matter of cryptography is very easily dealt with by discrete machinery, physics not so easily.

- "Intelligent Machinery." This passage was one of the epigraphs to Cryptonomicon, the influential novel by Neal Stephenson, in which Turing was a fictionalized character. It shared a page with a quote from Imelda Marcos.

- This is only a foretaste of what is to come, and only the shadow of what is going to be. We have to have some experience with the machine before we really know its capabilities. It may take years before we settle down to the new possibilities, but I do not see why it should not enter any one of the fields normally covered by the human intellect, and eventually compete on equal terms.

- 'The Mechanical Brain. Answer Found to 300 Year Old Problem' The Times newspaper, 11 June 1949 page 4 column 5.

- The sentence in bold appears on the latest British £50 bank note featuring Alan Turing which was released on 23 June 2021 on what would have been his 109th birthday.

- Science is a differential equation. Religion is a boundary condition.

- Epigram to Robin Gandy (1954); reprinted in Andrew Hodges, Alan Turing: the Enigma (Vintage edition 1992), p. 513.

- The Exclusion Principle is laid down purely for the benefit of the electrons themselves, who might be corrupted (and become dragons or demons) if allowed to associate too freely.

- Epigram to Robin Gandy (1954).

- Let us now assume, for the sake of argument, that these machines are a genuine possibility, and look at the consequences of constructing them. To do so would of course meet with great opposition, unless we have advanced greatly in religious toleration from the days of Galileo. There would be great opposition from the intellectuals who were afraid of being put out of a job. It is probable though that the intellectuals would be mistaken about this. There would be plenty to do, trying to understand what the machines were trying to say, i.e. in trying to keep one’s intelligence up to the standard set by the machines, for it seems probable that once the machine thinking method had started, it would not take long to outstrip our feeble powers. There would be no question of the machines dying, and they would be able to converse with each other to sharpen their wits. At some stage therefore we should have to expect the machines to take control, in the way that is mentioned in Samuel Butler’s “Erewhon”.

- "Intelligent Machinery, A Heretical Theory" (1951), from a BBC radio discussion programme, The ’51 Society.

On Computable Numbers, with an Application to the Entscheidungsproblem (1936)[edit]

- Proceedings of the London Mathematical Society, Vol. 2-42, Issue 1, pp. 230-265. Note: also see Turing machine & Turing's proof.

- The "computable" numbers may be described briefly as the real numbers whose expressions as a decimal are calculable by finite means. ...According to my definition, a number is computable if its decimal can be written down by a machine. ...I show that certain large classes of numbers are computable. They include, for instance, the real parts of all algebraic numbers, the real parts of the zeros of the Bessel functions, the numbers π, e, etc. The computable numbers do not, however, include all definable numbers. ...[C]onclusions are reached which are superficially similar to those of Gödel. ...[I]t is shown ...that the Hilbertian Entscheidungsproblem can have no solution. In a recent paper Alonzo Church... reaches similar conclusions...

- We may compare a man in the process of computing a real number to a machine which is only capable of a finite number of conditions q1, q2, ..., qK which will be called " m-configurations ".

- The machine is supplied with a "tape" (the analogue of paper) running through it, and divided into sections (called "squares") each capable of bearing a "symbol".

- The "scanned symbol" is the only one of which the machine is... "directly aware". However, by altering its m-configuration the machine can effectively remember some of the symbols which it has "seen" (scanned) previously.

- In some of the configurations in which the scanned square is blank... the machine writes down a new symbol on the scanned square: in other configurations it erases the scanned symbol.

- The machine may also change the square which is being scanned, but only by shifting it one place to right or left.

- [T]he m-configuration may be changed.

- Some of the symbols written down will form the sequence of figures which is the decimal of the real number... being computed. The others are just rough notes to "assist the memory ". ...[O]nly ...these rough notes ...will be liable to erasure.

- [T]hese operations include all those which are used in the computation...

- For some purposes we might use machines (choice... or c-machines) whose motion is only partially determined by the configuration... When such a machine reaches... ambiguous configurations, it cannot go on until some arbitrary choice has been made by an external operator. This would be the case if we were using machines to deal with axiomatic systems.

- In this paper I deal only with automatic machines, and will therefore often omit the prefix ɑ-.

- If an ɑ-machine prints two kinds of symbols, of which the first kind (called figures) consists entirely of 0 and 1 (the others being called symbols of the second kind), then the machine will be called a computing machine.

- It will be useful to put... tables into a... standard form. ...The lines of the table are... of form

m-config. | Symbol | Operations | Final m-config.

In this way we obtain a complete description of the machine. ...This new description of the machine may be called the standard description (S.D). ...[W]e shall have a description of the machine in the form of an arabic numeral. The integer represented by this numeral may be called a description number (D.N) of the machine. The D.N determine the S.D and the structure of the machine uniquely. The machine whose D.N is n may be described as (n).

- To each computable sequence there corresponds at least one description number, while to no description number does there correspond more than one computable sequence. The computable sequences and numbers are therefore enumerable.

- It is possible to invent a single machine which can be used to compute any computable sequence. If this machine is supplied with a tape on the beginning of which is written the S.D of some computing machine , then will compute the same sequence as .

Computing Machinery and Intelligence (1950)[edit]

- Published in Mind – A Quarterly Review of Psychology and Philosophy, vol. 59, #236 (1950). This paper describes what has come to be known as the Turing Test. At the time it was written, the term "computer" was a job title describing an individual who processed figures by hand. - Full text online

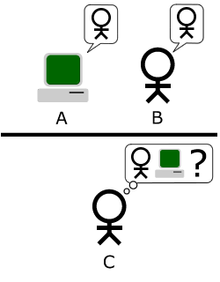

- "Can machines think?"... The new form of the problem can be described in terms of a game which we call the 'imitation game." It is played with three people, a man (A), a woman (B), and an interrogator (C) who may be of either sex. The interrogator stays in a room apart from the other two. The object of the game for the interrogator is to determine which of the other two is the man and which is the woman. He knows them by labels X and Y, and at the end of the game he says either "X is A and Y is B" or "X is B and Y is A." The interrogator is allowed to put questions to A and B... We now ask the question, "What will happen when a machine takes the part of A in this game?" Will the interrogator decide wrongly as often when the game is played like this as he does when the game is played between a man and a woman? These questions replace our original, "Can machines think?"

- We do not wish to penalise the machine for its inability to shine in beauty competitions, nor to penalise a man for losing in a race against an aeroplane. The conditions of our game make these disabilities irrelevant.

- May not machines carry out something which ought to be described as thinking but which is very different from what a man does?

- We are not asking whether all digital computers would do well in the game nor whether the computers at present available would do well, but whether there are imaginable computers which would do well.

- The idea behind digital computers may be explained by saying that these machines are intended to carry out any operations which could be done by a human computer.

- p. 436.

- A digital computer can usually be regarded as consisting of three parts: (i) Store. (ii) Executive unit. (iii) Control. ...The executive unit is the part which carries out the various individual operations involved in a calculation. ...It is the duty of the control to see that...[the table of] instructions are obeyed correctly and in the right order. ...A typical instruction might say—"Add the number stored in position 6809 to that in 4302 and put the result back into the latter storage position." Needless to say it would not occur in the machine expressed in English. It would more likely be coded in a form such as 6809430217. Here 17 says which of various possible operations [add] is to be performed on the two numbers. ...It will be noticed that the instruction takes up 10 digits and so forms one packet of information...

- Suppose Mother wants Tommy to call at the cobbler's every morning on his way to school to see if her shoes are done, she can ask him afresh every morning. Alternatively she can stick up a notice once and for all in the hall which he will see when he leaves for school and which tells him to call for the shoes, and also to destroy the notice when he comes back if he has the shoes with him.

- If one wants to make a machine mimic the behaviour of the human computer in some complex operation one has to ask him how it is done, and then translate the answer into the form of an instruction table. Constructing instruction tables is usually described as "programming."

- I believe that at the end of the century the use of words and general educated opinion will have altered so much that one will be able to speak of machines thinking without expecting to be contradicted.

- p. 442.

- I am not very impressed with theological arguments whatever they may be used to support. Such arguments have often been found unsatisfactory in the past. In the time of Galileo it was argued that the texts, "And the sun stood still... and hasted not to go down about a whole day" (Joshua x. 13) and "He laid the foundations of the earth, that it should not move at any time" (Psalm cv. 5) were an adequate refutation of the Copernican theory.

- pp. 443-444.

- Machines take me by surprise with great frequency.

- p. 450.

- The view that machines cannot give rise to surprises is due, I believe, to a fallacy to which philosophers and mathematicians are particularly subject. This is the assumption that as soon as a fact is presented to a mind all consequences of that fact spring into the mind simultaneously with it. It is a very useful assumption under many circumstances, but one too easily forgets that it is false. A natural consequence of doing so is that one then assumes that there is no virtue in the mere working out of consequences from data and general principles.

- p. 451.

- Another simile would be an atomic pile of less than critical size: an injected idea is to correspond to a neutron entering the pile from without. Each such neutron will cause a certain disturbance which eventually dies away. If, however, the size of the pile is sufficiently increased, the disturbance caused by such an incoming neutron will very likely go on and on increasing until the whole pile is destroyed. Is there a corresponding phenomenon for minds, and is there one for machines? There does seem to be one for the human mind. The majority of them seem to be "sub-critical," i.e., to correspond in this analogy to piles of sub-critical size. An idea presented to such a mind will on average give rise to less than one idea in reply. A smallish proportion are super-critical. An idea presented to such a mind may give rise to a whole "theory" consisting of secondary, tertiary and more remote ideas. Animals minds seem to be very definitely sub-critical. Adhering to this analogy we ask, "Can a machine be made to be super-critical?"

- p. 454.

- Presumably the child-brain is something like a note-book as one buys it from the stationer's. Rather little mechanism, and lots of blank sheets.

- p. 456.

- We can only see a short distance ahead, but we can see plenty there that needs to be done.

- p. 460.

'Can digital computers think?' (1951)[edit]

- It is customary... to offer a grain of comfort, in the form of a statement that some peculiarly human characteristic could never be imitated by a machine. ... I cannot offer any such comfort, for I believe that no such bounds can be set.

- 'Can digital computers think?'. Talk broadcast on BBC Third Programme, 15 May 1951.[1]

Quotes about Turing[edit]

- Sorted alphabetically by author or source

- Alan Turing was the first to make a careful analysis of the potential capabilities of machines, inventing his famous "Turing machines" for the purpose. He argued that if any machine could perform a computation, then some Turing machine could perform it. The argument focuses on the assertion that any machine's operations could be simulated, one step at a time, by certain simple operations, and that Turing machines were capable of those simple operations. Turing's first fame resulted from applying this analysis to a problem posed earlier by Hilbert, which concerned the possibility of mechanizing mathematics. Turing showed that in a certain sense, it is impossible to mechanize mathematics: We shall never be able to build an "oracle" machine that can correctly answer all mathematical questions presented to it with a "yes" or "no" answer. In another famous paper Turing went on to consider the somewhat different question, "Can machines think?." It is a different question, because perhaps machines can think, but they might not be any better at mathematics than humans are; or perhaps they might be better at mathematics than humans are, but not by thinking, just by brute-force calculation power. These two papers of Turing lie near the roots of the subjects today known as automated deduction and artificial intelligence.

- Michael J. Beeson, "The Mechanization of Mathematics," in Alan Turing: Life and Legacy of a Great Thinker (2004).

- I’d keep hearing: 'Oh, Alan Turing? Wasn’t he something to do with Bletchley Park? Didn’t he create the Apple logo? Didn’t he bite an apple?' But not many people know the whole story, that he invented the computer, broke the Enigma code, was prosecuted for being a homosexual and underwent a year of oestrogen injections before dying in 1954. The irony and sickness of it was extraordinary! He wasn’t shouting from the rooftops or trying to start a cause, he was just a man who was gay. He’s such a quiet hero.

- Benedict Cumberbatch, "Benedict Cumberbatch interview" in TimeOut (21 November 2016)

- Beyond any doubt, the most important thing that has happened in cognitive science was Turing’s invention of the notion of mechanical rationality.

- Jerry Fodor, "The Trouble with Psychological Darwinism" London Review of Books, Vol. 20 No. 2, 22 January 1998, pp.11-13.

- And if the physicists who understood its potential had not told their generals and politicians, these would certainly have remained in ignorance, unless they were themselves postgraduate physicists, which was very unlikely. Again, Alan Turing's celebrated paper of 1935, which was to provide the foundation of modern computer theory, was originally written as a speculative exploration for mathematical logicians. The war gave him and others the occasion to translate theory into the beginnings of practice for the purpose of code-breaking, but when it appeared nobody except a handful of mathematicians even read, let alone took notice of Turing's paper. Even in his own college this clumsy-looking pale-faced genius, then a junior fellow with a taste for jogging, who posthumously became a sort of icon among homosexuals, was not a figure of any prominence; at least I do not remember him as such.

- Eric Hobsbawm, Age of Extremes (1994), Chap. 18 : Sorcerers and Apprentices: The Natural Sciences

- Actually, with one alleged exception, the links of King’s dons with intelligence were with the British rather than the Soviet secret services. Kingsmen, headed by the small, roly-poly later professor of ancient history, F. E. Adcock, had set up the British codebreaking establishment in the First World War, and at least seventeen King’s dons were recruited by Adcock for the much more famous establishment at Bletchley during the Second World War, including probably the only genius at King’s in my undergraduate years, the mathematical logician Alan Turing, whom I recall as a clumsy-looking, pale-faced young fellow given to what would today be called jogging.

- Eric Hobsbawm, Interesting Times (2002), Chap. 7 : Cambridge

- From a very young age, I knew about the legend of Alan Turing – among awkward, nerdy teenagers, he is a patron saint. He never fit in, but accomplished these wonderful things, as part of a secret queer history of computer science.

And so I always dreamt of writing something about him, and I thought that there had never been a proper narrative treatment of his life, that he deserved. I by chance met the producers of the film at a party, and one of them told me they had optioned a biography. When I asked who it was, they said, ‘it’s a mathematician that you’ve never heard of.’ When they told me it was Alan Turing, I almost tackled them, and I told them I’d do anything to write this film, I’d write it for free. It was all about luck and passion.

- Here’s the thing. Alan Turing never got to stand on a stage like this and look out at all of these disconcertingly attractive faces. I do. And that’s the most unfair thing I’ve ever heard. So in this brief time here, what I wanted to do was say this: When I was 16-years-old, I tried to kill myself because I felt weird and I felt different, and I felt like I did not belong. And now I’m standing here — and so I would like this moment to be for this kid out there who feels like she’s weird or she’s different or she doesn’t fit in anywhere: Yes, you do. I promise you do. Stay weird, stay different — and then, when it’s your turn, and you are standing on this stage, please pass the same message to the next person who comes along.

- Graham Moore, in his acceptance speech for best adapted screenplay at the 87th Academy Awards presentations, quoted in "Oscars 2015: Graham Moore Tells Kids to 'Stay Weird, Stay Different'" in ABC News (22 February 2015).

- I’m not gay, but I don’t think you have to be gay to have a gay hero. Growing up, Alan Turing was certainly mine. … I’m also not the greatest mathematician of my generation. We have lots of biographical differences, but nonetheless I always identified with him so much.

- His high-pitched voice already stood out above the general murmur of well-behaved junior executives grooming themselves for promotion within the Bell corporation. Then he was suddenly heard to say: "No, I'm not interested in developing a powerful brain. All I'm after is just a mediocre brain, something like the President of the American Telephone and Telegraph Company."

- Andrew Hodges, describing an incident which occurred in the New York AT & T lab cafeteria in 1943, in Alan Turing: The Enigma of Intelligence (1983), p. 251.

- He wondered what one might ask in a structured conversation to decide if one's interlocutor was a human being or a computer. ...but the ultimate Turing test might be to pose the question "How would a guilt-stricken homosexual commit suicide?" Would a computer ever conceive of eating an apple laced with cyanide?

- Theodore Roszak, The Gendered Atom (1999).

- Although a mathematician, Turing took quite an interest in the engineering side of computer design. There was some discussion in 1947 as to whether a cheaper substance than mercury could not be found for use as an ultrasonic delay medium. Turing's contribution to this discussion was to advocate the use of gin, which he said contained alcohol and water in just the right proportions to give a zero temperature coefficient of propagation velocity at room temperature.

- Maurice V. Wilkes, "Computers Then and Now", Journal of the ACM 15 (1), (January 1968), pp. 1-7.

- Turing had a strong predeliction for working things out from first principles, usually in the first instance without consulting any previous work on the subject, and no doubt it was this habit which gave his work that characteristically original flavor. I was reminded of a remark which Beethoven is reputed to have made when he was asked if he had heard a certain work of Mozart which was attracting much attention. He replied that he had not, and added "neither shall I do so, lest I forfeit some of my own originality."

- James H. Wilkinson, "Some Comments from a Numerical Analyst", 1970 Turing Award lecture, Journal of the ACM 18:2 (February 1971), pp. 137–147.

- He was particularly fond of little programming tricks (some people would say that he was too fond of them to be a "good" programmer) and would chuckle with boyish good humor at any little tricks I may have used.

- James H. Wilkinson, "Some Comments from a Numerical Analyst", 1970 Turing Award lecture, Journal of the ACM 18:2 (February 1971), pp. 137–147.

See also[edit]

References[edit]

External links[edit]

Encyclopedic article on Alan Turing on Wikipedia

Encyclopedic article on Alan Turing on Wikipedia Media related to Alan Turing on Wikimedia Commons

Media related to Alan Turing on Wikimedia Commons Works related to Author:Alan Turing on Wikisource

Works related to Author:Alan Turing on Wikisource- Time 100: Alan Turing

- Stanford Encyclopedia of Philosophy entry

- Profile at the University of St. Andrews

- Profile at Turing.org

- AlanTuring.net - Turing Archive for the History of Computing

- The Turing Digital Archive

- Alan Turing - Towards a Digital Mind: Part 1

Categories:

- 1912 births

- 1954 deaths

- People from London

- Academics from the United Kingdom

- Philosophers from England

- Computer scientists from the United Kingdom

- Cryptographers

- Cyberneticists

- Mathematicians from England

- Logicians from England

- Biologists from England

- Inventors

- Designers

- LGBT people

- Atheists from England

- Anti-fascists

- Suicides

- Princeton University alumni

- University of Cambridge alumni

- Fellows of the Royal Society